Hi everybody

I share a piece of code for reinforcement learning technic with 2 variables on only 3 historical of lookback…(very low)… It is horrible thinks without ARRAYS!!

Unfortunately prorealcode is very limited for such technics because without arrays it is very time consuming and very bigs codes…For this kind of things MQL4 (metatrader) is more powerful! … I’d like that developer people of prorealtime could put arrays too in next releases!! ! 😮

Do you have some ideas?

//reinforcement learning on 2 indicators (E1 and E2) and 4 periods of lookback (t)

//to calculate future rewards R and States S

//@ARNAUD DEGARDIN NOV.2017

// Definizione dei parametri del codice

DEFPARAM CumulateOrders = False // Posizioni cumulate disattivate

//parameters

SL=0.3

TP=0.5

per=150

bbfact=1

Rmin=0.1 //perc. min. rewards (var price in %)

// indicators

ATR5 = AverageTrueRange[5](close)

ATR20 = AverageTrueRange[20](close)

PEMA10 = Close/ExponentialAverage[10](close)

//LOOKBACK:

//states S Ei at t2

E12=ATR5[2]/ATR20[2]

BBUPE12=average[per](E12)-(- bbfact*std[per](E12) )

BBDOWNE12=average[per](E12)-bbfact*std[per](E12)

if E12<(BBUPE12-BBDOWNE12)/3 THEN

SE12=10

else

if(E12<(BBUPE12-BBDOWNE12)/3*2 AND E12>(BBUPE12-BBDOWNE12)/3) THEN

SE12=20

else

SE12=30

endif

endif

E22=PEMA10[2]

BBUPE22=average[per](E22)-(- bbfact*std[per](E22) )

BBDOWNE22=average[per](E22)-bbfact*std[per](E22)

if E22<(BBUPE22-BBDOWNE22)/3 THEN

SE22=1

else

if(E22<(BBUPE22-BBDOWNE22)/3*2 AND E22>(BBUPE22-BBDOWNE22)/3) THEN

SE22=2

else

SE22=3

endif

endif

//states S Ei at t3

E13=ATR5[3]/ATR20[3]

BBUPE13=average[per](E13)-(- bbfact*std[per](E13) )

BBDOWNE13=average[per](E13)-bbfact*std[per](E13)

if E13<(BBUPE13-BBDOWNE13)/3 THEN

SE13=10

else

if(E13<(BBUPE13-BBDOWNE13)/3*2 AND E13>(BBUPE13-BBDOWNE13)/3) THEN

SE13=20

else

SE13=30

endif

endif

E23=PEMA10[3]

BBUPE23=average[per](E23)-(- bbfact*std[per](E23) )

BBDOWNE23=average[per](E23)-bbfact*std[per](E23)

if E23<(BBUPE23-BBDOWNE23)/3 THEN

SE23=1

else

if(E23<(BBUPE23-BBDOWNE23)/3*2 AND E23>(BBUPE23-BBDOWNE23)/3) THEN

SE23=2

else

SE23=3

endif

endif

//states S Ei at t4

E14=ATR5[4]/ATR20[4]

BBUPE14=average[per](E14)-(- bbfact*std[per](E14) )

BBDOWNE14=average[per](E14)-bbfact*std[per](E14)

if E14<(BBUPE14-BBDOWNE14)/3 THEN

SE14=10

else

if(E14<(BBUPE14-BBDOWNE14)/3*2 AND E14>(BBUPE14-BBDOWNE14)/3) THEN

SE14=20

else

SE14=30

endif

endif

E24=PEMA10[4]

BBUPE24=average[per](E24)-(- bbfact*std[per](E24) )

BBDOWNE24=average[per](E24)-bbfact*std[per](E24)

if E24<(BBUPE24-BBDOWNE24)/3 THEN

SE24=1

else

if(E24<(BBUPE24-BBDOWNE24)/3*2 AND E24>(BBUPE24-BBDOWNE24)/3) THEN

SE24=2

else

SE24=3

endif

endif

//Attribute future Rewards for each States (shift prediction -1 period)

// rewards Rti

Rt1=(close[1]-close[2])/(close[2])

Rt2=(close[2]-close[3])/(close[3])

Rt3=(close[3]-close[4])/(close[4])

//S1=SE11+SE21 //not used here

S2=SE12+SE22

S3=SE13+SE23

S4=SE14+SE24

//definition of action based on State and FUTURE rewards

//Action on S2 - A2 : with future Rt1

IF Rt1 >Rmin/100 THEN

A2=1 //TO BUY ASSOCIATE TO S2

ELSE

IF Rt1 <-Rmin/100 THEN

A2=-1 //TO SELL ASSOCIATE TO S2

ELSE

A2=0

ENDIF

ENDIF

//Action on S3 - A3 : with future Rt2

IF Rt2 >Rmin/100 THEN

A3=1 //TO BUY ASSOCIATE TO S2

ELSE

IF Rt2 <-Rmin/100 THEN

A3=-1 //TO SELL ASSOCIATE TO S2

ELSE

A3=0

ENDIF

ENDIF

//Action on S4 - A4 : with future Rt3

IF Rt3 >Rmin/100 THEN

A4=1 //TO BUY ASSOCIATE TO S2

ELSE

IF Rt3 <-Rmin/100 THEN

A4=-1 //TO SELL ASSOCIATE TO S2

ELSE

A4=0

ENDIF

ENDIF

//ACTUAL state S at t0

E10=ATR5/ATR20

BBUPE10=average[per](E10)-(- bbfact*std[per](E10) )

BBDOWNE10=average[per](E10)-bbfact*std[per](E10)

if E10<(BBUPE10-BBDOWNE10)/3 THEN

SE10=10

else

if(E10<(BBUPE10-BBDOWNE10)/3*2 AND E10>(BBUPE10-BBDOWNE10)/3) THEN

SE10=20

else

SE10=30

endif

endif

E20=PEMA10

BBUPE20=average[per](E20)-(- bbfact*std[per](E20) )

BBDOWNE20=average[per](E20)-bbfact*std[per](E20)

if E20<(BBUPE20-BBDOWNE20)/3 THEN

SE20=1

else

if(E20<(BBUPE20-BBDOWNE20)/3*2 AND E20>(BBUPE20-BBDOWNE20)/3) THEN

SE20=2

else

SE20=3

endif

endif

//then actual Action definition - based on states and Actions matrix:

S0=SE10+SE20

IF S0=S2 THEN

A0=A2

ENDIF

IF S0=S3 THEN

A0=A3+A0

ENDIF

IF S0=S4 THEN

A0=A4+A0

ELSE

A0=0 //do nothing

ENDIF

//entry and exit logic

TOSELL= (A0<=-1)

TOBUY= (A0>=1)

IF TOSELL THEN

SELLSHORT 1 CONTRACT AT MARKET

ENDIF

IF TOBUY THEN

BUY 1 CONTRACT AT MARKET

ENDIF

IF SHORTONMARKET and TOBUY THEN

EXITSHORT AT MARKET

ENDIF

IF LONGONMARKET and TOSELL THEN

SELL AT MARKET

ENDIF

SET STOP %LOSS SL

SET TARGET %PROFIT TP

You are right, some things are difficult (or impossible) to code without Arrays capabilities. Because the programming language was thought of in these beginnings as simply as possible. Today and since the version 10 of prorealtime and the possibility of doing automatic trading, since also version 10.3 and the new graphic possibilities, the need of arrays tables is more and more felt, it is undeniable! I also hope that it will happen soon, I will try to know more, it happens so often to me to be blocked on a code .. 😐

About your code, I do not understand what you want to do exactly? Are there no possibilities to make it easier with a loop?

I love and can really appreciate what you are attempting here. Especially given the constraints presented by PRT’s lack of array support.

I must admit I find it difficult to entirely follow the logic in your code. Maybe you can add a few more descriptive comments in your code?

Anyway, I have attempted similar techniques using PRT in the form of Heuristics but as you say without arrays the code becomes very bulky very quickly.

Did you test the above on any specific instrument and timeframe?

Hi Nicolas, thanks for your reply…

It s frustrating because it could have big potential to save data in arrays, such as in my example…

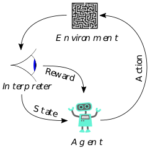

To explain the principle of reinforcement learning (see wikipedia image) and explaination:

The typical framing of a Reinforcement Learning (RL) scenario: an agent takes actions in an environment, which is interpreted into a reward and a representation of the state, which are fed back into the agent.

- You look the state S of precedent x periods of time and “code” the states by the use of indicators.

- ie. If you define 3 levels for each indicators, the state 312 for 3 variables a.b.c means A is in state 3, B in state 1 and C in state 2

- The reward R corrispond to the price increase in the future after the state (you can shift to 1 period or more)

- The Action A you define corrispond to BUY or SELL based on future rewards

- if more than 1 state have different rewards, you give a probability

So the arrays are useful because you need to feed a lot on variables for each states you want to make learn to the machine and multiply by the number of indicators to want to use… i.e. 4 indicators with 4 levels and lookback of 4 periods …means 4x4x4=64 variables to store in PRT code.. and compare to the actual state and predict the action to take

Hi

@juanj

Thanks for your comments above… I see it now, sorry!

Yes I try to test it on PRT but to code a reinforcement learning you need to lookback at at least 100-1000 periods. In the above code you can lookback only up to 3 periods…. so it is insufficient to compare the states with the current and make predictions…

I’m trying it with arrays in MQL4, if you know it, it seems more difficult but the code is much short by the use of array and loops:

(MQL4 code ONLY)

string symb1="GER30"; //used for calculation

double Rmin=0.05; //perc. min. rewards (var price in %)

int PeriodsLooback=200; //number of period lookback

int shiftR=3; //shift of reward calculation

//LOOKBACK arrays:

//arrays feeding of indicators - dim1: period number

double E1[100];

double E2[100];

double E3[100];

//states arrays definition - dim1: period number

double S1[100];

double S2[100];

double S3[100];

//global state code arrays definition - dim1: period number

double St[100];

//arrays feeding of rewards - dim1: period number

double Rt[100];

//arrays feeding of Actions - dim1: period number

double At[100];

At[0]=0; //first time definition

for(int i=0;i<PeriodsLooback;i++)

{

E1[i]=iATR(symb1,0,5,i)/iATR(symb1,0,20,i);

E2[i]=iClose(symb1,0,i)/iMA(symb1,0,10,0,MODE_SMMA,PRICE_MEDIAN,i);

E3[i]=iADX(symb1,0,5,PRICE_MEDIAN,MODE_MAIN,i)/100;

// states definition for 3 levels 1-3 of "ABC"

if (E1[i]<0.33) { S1[i]=100;}

else if(E1[i]>0.33 && E1[i]<0.66) { S1[i]=200;}

else if(E1[i]>0.66){ S1[i]=300;}

if (E2[i]<0.33) {S2[i]=10;}

else if(E2[i]>0.33 && E2[i]<0.66) {S2[i]=20;}

else if(E2[i]>0.66){S2[i]=30;}

if (E3[i]<0.33) {S3[i]=1;}

else if(E3[i]>0.33 && E3[i]<0.66) {S3[i]=2;}

else if(E3[i]>0.66){ S3[i]=3;}

//global state code calculation at t period

St[i]=S1[i]+S2[i]+S3[i];

//Attribute future Rewards for each States (shift prediction -2 period)###############

// rewards Rti calculation for future prediction

Rt[i]=(iClose(symb1,0,i)-iClose(symb1,0,i+shiftR))/iClose(symb1,0,i+shiftR);

//feeding actions based on States and FUTURE rewards###################

// ie.: Action at t=3 defined by Rewards at t=1 for 2 periods of shift

if (Rt[i] >Rmin/100) {At[i+shiftR]=1;} //TO BUY }

else if (Rt[i+shiftR] < -Rmin/100) {At[i+shiftR]=-1;} //TO SELL }

else { At[i+shiftR]=0 ;}

} //END LOOKBACK

//then actual Action definition : #################################

//LOOKING BACK FOR SAME STATES OF ACTUAL ONE

for(int x=1;x<PeriodsLooback;x++)

{

if (St[0]==St[x]) {

At[0]=At[x]+At[0]; //cumulate 1 or -1 actions to find prob.

}

}

//calculation of actual state

if(At[0]>=1)

{

LongEntryCondition = true;

}

if(At[0]<= -1)

{

ShortEntryCondition = true;

}

This code is not sure, use only in demo!

I like PRT because it is easy to backtest vs metatrader, so I hope it will possibile to run code like this with arrays in the closed future

I’m please to help the community and receive feedbacks!

… one another usefull function of MQL4 is that you can use different securities in your code (i.e. for spread calculation… ) and look different time frame also! 😉

I’d like to do it in PRT because it ‘s faster!